RESEARCH

My research interests are in

medical image analysis, computer vision, artificial

intelligence, and computer graphics. My current and future

research may provide an interdisciplinary foundation through

collaborations with researchers in life science, pathology,

radiology, bio-nanotechnology, human-computer interaction, and

visualization in virtual reality environments.

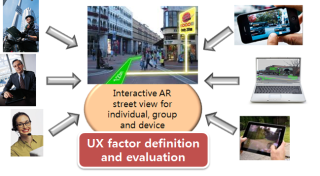

Development of MIMO HCI SW Technology for Active Intentional Cognition and Response of Multiple Users

The final research goals are as follows:

1. Development of core SW technology for a novel MIMO HCI that controls multiple computing devices and recognizes the conscious/unconscious intent of multiple users
2. Development of core SW for acquisition/processing/representation/transcoding/convergence of multiple users' multiple information, and for information exchange among multiple computing devices

3D Reconstruction Technology Development for Car Accident Scenes Using Multi-view Black Box Images

Device and

User-purpose Oriented Contents Reconstruction/Re-augmentation

Software Framework from Massive-scale Streetview

Information

We propose an integration framework based on information

analysis, hierarchical data representation and reconstruction

techniques on massive-scale multimedia-spatial data. Our

research sub-goals include the following: hyper-perspective

panoramic image construction, object-type data

reconstruction, interactive re-augmentation,

user-centric and device-optimized data representation,

and large-scale multimedia data processing.

Content-based video

assessment based on color and optical flow pattern

analyses

The main goal of the proposed research is to quantify the

factors that can be potentially harmful to viewers

while watching video streams from camera input, computer

games, and video content. Previous work mainly focused on

clinical trials about why and how people feel simulation

sickness. In this research, we propose a content-based

analysis framework that can efficiently quantify the factors

that cause simulation sickness. In particular, we tackle the

problem by analyzing color distribution and optical flows in

consecutive video frames.
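As a rough illustration of the two per-frame measurements named above (not the project's actual analysis), the sketch below compares normalized intensity histograms of consecutive frames and fits a single global motion vector by least squares under the brightness-constancy assumption (a Lucas-Kanade style estimate). Frames are assumed to be small grayscale grids stored as nested lists; the function names, the bin count, and the choice of a single global vector are illustrative assumptions.

```python
def histogram(frame, bins=8, max_value=256):
    """Normalized intensity histogram of a grayscale frame (list of rows)."""
    counts = [0] * bins
    total = 0
    for row in frame:
        for v in row:
            idx = min(max(int(v * bins / max_value), 0), bins - 1)
            counts[idx] += 1
            total += 1
    return [c / total for c in counts]


def histogram_change(prev, curr, bins=8):
    """L1 distance between the histograms of two frames, in [0, 2]."""
    hp, hc = histogram(prev, bins), histogram(curr, bins)
    return sum(abs(a - b) for a, b in zip(hp, hc))


def global_flow(prev, curr):
    """Least-squares global motion (u, v) from Ix*u + Iy*v + It = 0.

    Spatial gradients use central differences on interior pixels of `prev`.
    Returns (0.0, 0.0) when the system is degenerate (aperture problem).
    """
    sxx = sxy = syy = sxt = syt = 0.0
    h, w = len(prev), len(prev[0])
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix = (prev[y][x + 1] - prev[y][x - 1]) / 2.0
            iy = (prev[y + 1][x] - prev[y - 1][x]) / 2.0
            it = curr[y][x] - prev[y][x]
            sxx += ix * ix
            sxy += ix * iy
            syy += iy * iy
            sxt += ix * it
            syt += iy * it
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-12:
        return (0.0, 0.0)
    u = (-sxt * syy + syt * sxy) / det
    v = (-syt * sxx + sxt * sxy) / det
    return (u, v)
```

A per-frame score could then combine `histogram_change` with the magnitude of `global_flow`; how such quantities relate to simulation sickness is the research question itself and is not encoded here.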

3D Volume

Reconstruction from Confocal Laser Scanning Microscopy

Imagery

(Joint work with Department of

Pathology, University of Illinois at Chicago)

We study a three-dimensional volume

reconstruction framework which consists of volume

reconstruction procedures using multiple automation levels,

feature types, and feature dimensionalities, a data-driven

registration decision support system, an evaluation study of

registration accuracy, and a novel intensity enhancement

technique for 3D CLSM volumes.

The motivation for developing the framework came from the lack

of 3D volume reconstruction techniques for the CLSM imaging modality.

The 3D volume reconstruction problem is challenging due to

significant variations of intensity and shape of cross

sectioned structures, unpredictable and inhomogeneous

geometrical warping during medical specimen preparation, and an

absence of external fiducial markers. The framework addresses

the problem of automation in the presence of the above

challenges as they are frequently encountered during CLSM-based

3D volume reconstructions used for cell biology

investigations.

The broader impact of the presented work is in providing the

algorithms in the form of web-enabled tools to the medical

community so that medical researchers can minimize laborious

and time-intensive 3D volume reconstructions using the tools

and computational resources at NCSA.

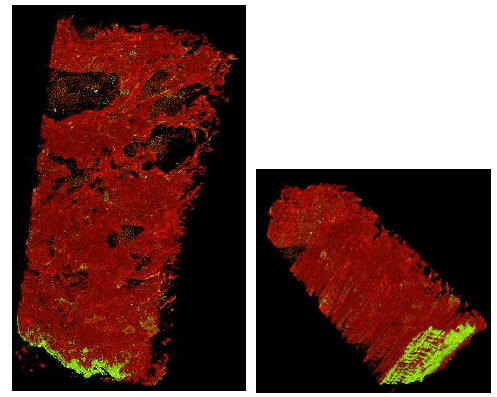

3D Volume Reconstruction of Extracellular Matrix

Proteins in Uveal Melanoma

We developed a method for the

three-dimensional reconstruction of volume for these

extravascular matrix proteins from serial paraffin sections cut

at 4 µm thicknesses and stained with a fluorescent-labeled

antibody to laminin. Each section was examined with confocal

laser scanning microscopy (CLSM), and 13 images were

recorded in the Z-dimension for each slide. The input CLSM

imagery is composed of a set of 3D sub-volumes (stacks of 2D

images) acquired at multiple confocal depths, from a sequence

of consecutive slides. The automated reconstruction

process included (1) unsupervised methods for selecting an

image frame from a sub-volume based on entropy and contrast

criteria, (2) a fully automated registration technique for

image alignment, and (3) an improved histogram equalization

method that compensates for spatially varying image intensities

in CLSM imagery due to photo-bleaching. We compared image

alignment accuracy of a fully automated method with

registration accuracy achieved by human subjects using a manual

method. In this study, automated 3D volume reconstruction

provided significant improvement in accuracy, consistency of

results, and performance time for CLSM images acquired from

serial paraffin sections.
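Step (1) above, choosing one frame per sub-volume by entropy and contrast, can be sketched as follows. This is a minimal illustration under assumptions not stated in the text: entropy is the Shannon entropy of a 16-bin intensity histogram, contrast is the standard deviation of intensities, and a frame is chosen by maximum entropy among frames that pass a contrast threshold. The function names and the selection rule are hypothetical.

```python
import math


def entropy(frame, bins=16, max_value=256):
    """Shannon entropy (bits) of the intensity histogram of a frame."""
    counts = [0] * bins
    n = 0
    for row in frame:
        for v in row:
            idx = min(max(int(v * bins / max_value), 0), bins - 1)
            counts[idx] += 1
            n += 1
    return -sum((c / n) * math.log2(c / n) for c in counts if c)


def contrast(frame):
    """Standard deviation of intensities, used as a simple contrast measure."""
    values = [v for row in frame for v in row]
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))


def select_frame(stack, min_contrast=0.0):
    """Index of the highest-entropy frame whose contrast is at least
    `min_contrast`, or None if no frame qualifies."""
    best_index, best_entropy = None, -1.0
    for i, frame in enumerate(stack):
        if contrast(frame) < min_contrast:
            continue
        e = entropy(frame)
        if e > best_entropy:
            best_index, best_entropy = i, e
    return best_index
```

For a stack of 13 frames per slide, `select_frame(stack)` would return the index of the frame passed on to registration.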

The distribution of looping

patterns of laminin in uveal melanomas and other tumors has

been associated with adverse outcomes. Moreover, these patterns

are generated by highly invasive tumor cells through the

process of vasculogenic mimicry and are therefore not blood

vessels. Nevertheless, these extravascular matrix patterns

conduct plasma. The three-dimensional configuration of these

laminin-rich patterns compared with blood vessels has been the

subject of speculation and intensive investigation.

Accuracy Evaluation for Region Centroid-Based

Registration

We

introduce an accuracy evaluation of a semi-automatic

registration technique for 3D volume reconstruction from

fluorescent confocal laser scanning microscope (CLSM) imagery.

The presented semi-automatic method is designed based on our

observations that (a) an accurate point selection is much

harder than an accurate region (segment) selection for a human,

(b) a centroid selection of any region is less accurate by a

human than by a computer, and (c) registration based on

the structural shape of a region rather than on an

intensity-defined point is more robust to noise and to

morphological deformation of features across stacks. We applied

the method to image mosaicking and image alignment registration

steps and evaluated its performance with 20 human subjects on

CLSM images with stained blood vessels. Our experimental

evaluation showed significant benefits of automation for 3D

volume reconstruction in terms of achieved accuracy,

consistency of results and performance time. In addition, the

results indicate that the differences between registration

accuracy obtained by experts and by novices disappear with the

proposed semi-automatic registration technique while the

absolute registration accuracy increases.
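The idea behind observations (a) and (b), where a person selects regions and the computer derives the centroids used as control points, can be sketched as below. The transformation model is an assumption: the sketch fits a 2D rigid transform (rotation plus translation) to matched centroid pairs in closed form, which may differ from the model used in the evaluated method. Function names are illustrative.

```python
import math


def centroid(mask):
    """Centroid (x, y) of the nonzero pixels of a binary mask (list of rows)."""
    sx = sy = n = 0
    for y, row in enumerate(mask):
        for x, v in enumerate(row):
            if v:
                sx += x
                sy += y
                n += 1
    if n == 0:
        raise ValueError("empty region")
    return (sx / n, sy / n)


def rigid_from_centroids(src, dst):
    """Least-squares rotation angle (radians) and translation (tx, ty)
    mapping matched points `src` onto `dst`."""
    n = len(src)
    csx = sum(p[0] for p in src) / n
    csy = sum(p[1] for p in src) / n
    cdx = sum(p[0] for p in dst) / n
    cdy = sum(p[1] for p in dst) / n
    dot = cross = 0.0
    for (xs, ys), (xd, yd) in zip(src, dst):
        xs, ys, xd, yd = xs - csx, ys - csy, xd - cdx, yd - cdy
        dot += xs * xd + ys * yd      # weights cos(theta)
        cross += xs * yd - ys * xd    # weights sin(theta)
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    tx = cdx - (c * csx - s * csy)
    ty = cdy - (s * csx + c * csy)
    return theta, (tx, ty)
```

In use, `centroid` would be applied to each user-selected region in both images, and the resulting point pairs passed to `rigid_from_centroids`.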

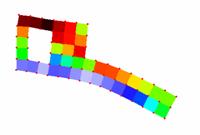

Three-dimensional Volume Reconstruction Based on

Trajectory Fusion

We address the

problem of 3D volume reconstruction from depth adjacent

sub-volumes (i.e., sets of image frames) acquired using

confocal laser scanning microscopy (CLSM). Our goal is to align

sub-volumes by estimating an optimal global image

transformation which preserves morphological smoothness of

medical structures (called features, for instance blood

vessels) inside of a reconstructed 3D volume. We approached the

problem by learning morphological characteristics of structures

inside of each sub-volume (e.g., centroid trajectories of

features). Next, adjacent sub-volumes are aligned by fusing the

morphological characteristics of structures using

extrapolation and model fitting. Finally, a global sub-volume

to sub-volume transformation is computed based on the entire

set of fused structures. The trajectory-based 3D volume

reconstruction method described here is evaluated with four

consecutive physical sections and using two metrics for

morphological continuity.
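A much-simplified sketch of the extrapolate-and-fuse idea is shown below. It assumes each feature's centroid trajectory is modeled by a straight line in depth and that the global transformation is a pure translation. Both are simplifying assumptions made for illustration, and the function names are hypothetical.

```python
def fit_line(zs, vs):
    """Least-squares line v = a*z + b; requires at least two distinct z."""
    n = len(zs)
    mz = sum(zs) / n
    mv = sum(vs) / n
    szz = sum((z - mz) ** 2 for z in zs)
    a = sum((z - mz) * (v - mv) for z, v in zip(zs, vs)) / szz
    return a, mv - a * mz


def extrapolate(track, z):
    """Predict the (x, y) centroid at depth z from a track of (z, x, y)."""
    zs = [p[0] for p in track]
    ax, bx = fit_line(zs, [p[1] for p in track])
    ay, by = fit_line(zs, [p[2] for p in track])
    return (ax * z + bx, ay * z + by)


def estimate_shift(upper_tracks, lower_tracks, z_interface):
    """Mean (dx, dy) that moves lower sub-volume features onto the
    trajectories extrapolated from the upper sub-volume at the interface.
    Tracks are matched by position in the two lists."""
    dx = dy = 0.0
    for upper, lower in zip(upper_tracks, lower_tracks):
        xu, yu = extrapolate(upper, z_interface)
        xl, yl = extrapolate(lower, z_interface)
        dx += xu - xl
        dy += yu - yl
    n = len(upper_tracks)
    return (dx / n, dy / n)
```

Using every matched feature in the average corresponds to computing the global transformation from the entire set of fused structures, here restricted to translation.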

Intensity Correction

of Fluorescent Confocal Laser Scanning Microscope Images by

Mean-Weight Filtering

We address the problem of

intensity correction of fluorescent confocal laser scanning

microscope (CLSM) images. CLSM images are frequently used in

the medical domain for obtaining 3D information about specimen

structures by imaging a set of 2D cross sections and performing

3D volume reconstruction afterwards. However, 2D images

acquired with fluorescent CLSM exhibit significant

intensity heterogeneity, for example, due to photo-bleaching

and fluorescent attenuation in depth. We developed an intensity

heterogeneity correction technique that (a) adjusts intensity

heterogeneity of 2D images, (b) preserves fine structural

details, and (c) enhances image contrast, by performing

spatially adaptive mean-weight filtering. Our solution is

obtained by formulating an optimization problem, followed by

filter design and automated selection of filtering parameters.

The proposed filtering method is experimentally compared with

several existing techniques by using four quality metrics:

contrast, intensity heterogeneity (entropy) in low frequency

domain, intensity distortion in high frequency domain, and

saturation. Based on our experiments and the four quality

metrics, the developed mean-weight filtering outperforms other

intensity correction methods by at least a factor of 1.5 when

applied to fluorescent CLSM images.
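The text does not give the formula of the mean-weight filter, so the sketch below is not that filter. It shows a generic spatially adaptive correction of the same flavor, assumed here for illustration only: each pixel is rescaled by the ratio of the global mean to the mean of its local window, which flattens slowly varying intensity while leaving local detail relative to the neighborhood. The function name and the window radius are hypothetical.

```python
def local_mean_correct(image, radius=1):
    """Rescale each pixel by global_mean / local_mean, where local_mean is
    taken over a (2*radius+1)^2 window clipped at the image border."""
    h, w = len(image), len(image[0])
    global_mean = sum(sum(row) for row in image) / (h * w)
    out = []
    for y in range(h):
        out_row = []
        for x in range(w):
            total = 0.0
            count = 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += image[yy][xx]
                    count += 1
            local_mean = total / count
            # Guard against division by zero in completely dark windows.
            out_row.append(image[y][x] * global_mean / local_mean
                           if local_mean else 0.0)
        out.append(out_row)
    return out
```

On an image whose brightness ramps steadily from left to right, the corrected interior pixels come out close to the global mean, so the ramp is largely removed.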

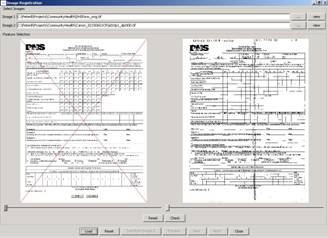

Automated Hand-filled

Form Analysis

The project considers

automated information extraction from hand-filled forms,

specifically those used by the Department of Human Service (DHS),

Illinois. The process includes distribution and re-collection

of the printed forms for traditional hand-written filing, raw

image acquisition of the collected forms, and semi- and fully

automated information extraction for future data mining

support. Automated data extraction from a hand-filled form

is considered a hard problem unless a standardized form is

used, e.g., teleform. For information extraction without

modifying the existing form, we propose automated image

processing techniques such as robust registration of

geometrically distorted input images, segmentation of the input

fields, and classification of binary answers with high

confidence. The processed data may be stored as a PDF form with

annotations or a plain text form associated with raw images.
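The last step, classifying a binary answer with an explicit confidence margin, can be illustrated with a minimal sketch. It assumes the two answer boxes have already been registered and segmented into small grayscale crops, and it uses the fraction of dark pixels as the only feature. The threshold, the margin, and the function names are illustrative assumptions rather than the project's classifier.

```python
def fill_ratio(field, threshold=128):
    """Fraction of pixels darker than `threshold` (treated as ink)."""
    dark = total = 0
    for row in field:
        for v in row:
            total += 1
            if v < threshold:
                dark += 1
    return dark / total


def classify_answer(yes_box, no_box, margin=0.1):
    """Return "yes" or "no" when one box holds clearly more ink than the
    other, and "uncertain" otherwise so the form can go to manual review."""
    fy, fn = fill_ratio(yes_box), fill_ratio(no_box)
    if fy - fn > margin:
        return "yes"
    if fn - fy > margin:
        return "no"
    return "uncertain"
```

Routing the "uncertain" cases to a person is one way to combine the semi-automated and fully automated modes mentioned above.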

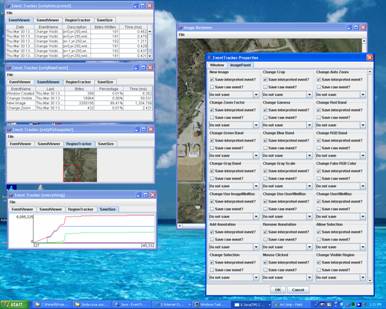

Data Integration and

Information Gathering about Decision Processes using Geospatial

Electronic Records

The size, complexity and

heterogeneity of geospatial collections pose formidable

challenges for the National Archives in terms of high

performance data storage, access, integration, analysis and

visualization. These challenges become even more prominent when

temporal aspects of data processing are involved as in the

context of high assurance decision making and high confidence

application scenarios. As part of this project, we address the

problems of gathering, archiving and analyzing information

about decision-making processes using geospatial electronic

records (e-records). The ultimate goal of our research is to

understand the cost of information archival using

cutting-edge technologies, high performance computing and novel

computer architectures.

Network for Earthquake

Engineering Simulation (NEES)

(Joint work with Department of

Civil and Environmental Engineering, University of Illinois at

Urbana-Champaign)

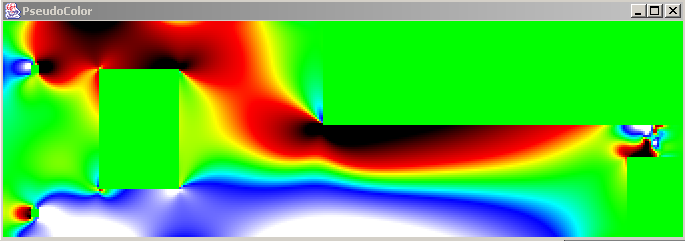

We

address the problem of multi-instrument analysis of point and

raster data. An image (raster data) is one of the most popular

data types in many applications due to its low cost and flexibility.

However, when using image data only, many applications may face

other problems, such as camera calibration, limited field of

view and resolution, and low accuracy compared with contact

sensors. In addition to image data, our research includes

point-based sensors that are being used to obtain more accurate

measurements in terms of spatial sampling and value

precision.

The measurement process with

multiple sensors poses several challenges for multi-instrument

data analysis, for example, sensor registration, data

interpolation, variable transformation, data overlay and

spatial measurement evaluation. We will present a method of

sensing, data conversion and data verification and describe our

preliminary results from the on-going research investigating

large material structures. Our work is part of the Network for

Earthquake Engineering Simulation (NEES) project and is

conducted in collaboration with the Civil and Environmental

Engineering Department, UIUC.
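The data interpolation step, in which sparse point-sensor readings are turned into a raster that can be overlaid on image data, can be sketched with inverse distance weighting. The choice of inverse distance weighting is an assumption made for illustration. The text does not say which interpolation method the project uses, and the sensor positions are assumed to be already registered to the raster grid.

```python
def idw(sensors, x, y, power=2.0):
    """Inverse-distance-weighted estimate at (x, y).

    `sensors` is a list of (sx, sy, value) tuples in grid coordinates.
    A query that coincides with a sensor returns that sensor's value.
    """
    num = den = 0.0
    for sx, sy, value in sensors:
        d2 = (x - sx) ** 2 + (y - sy) ** 2
        if d2 == 0:
            return float(value)
        weight = 1.0 / d2 ** (power / 2.0)
        num += weight * value
        den += weight
    return num / den


def rasterize(sensors, width, height, power=2.0):
    """Interpolate point readings onto a width x height raster (list of rows)."""
    return [[idw(sensors, x, y, power) for x in range(width)]
            for y in range(height)]
```

The resulting raster can be compared cell by cell with an image-derived measurement, which is one simple form of the data overlay and spatial measurement evaluation listed above.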

Autonomous Mobile Robot

Navigation using Omni-directional Vision

We address the problem of

systematic exploration of an unfamiliar world environment to

build a qualitative map by searching for recognizable targets.

Generated maps are essential for further mobile robot control,

self-localization, and path planning. While exploring, a map is

constructed which contains a set of regions of free-space

delineated by recognizable targets (landmarks) and the

connectivity (adjacency and overlap) of these regions. Within a

region, the robot can freely navigate without collision and

reference its position with respect to at least three

landmarks. This is achieved by exploiting the visibility

constraint -- if a landmark is visible to a robot, there are no

obstacles on the line segment between the robot and the

landmark. As the robot moves along a trajectory while tracking

a landmark, a region of free-space is swept out. By

representing a collection of free regions and their

region-to-region connectivity as a graph, path planning amounts

to graph search and execution of the plan by a robot amounts to

local movements to enter into the next free region. Both for

exploration and for navigation, neither metric information about the

robot's path nor absolute coordinates of its position or of

landmark locations are required or recorded. After exploring,

the robot produces a compact map which covers a large area, and

provides information for fast self-localization and flexible

path planning.
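Path planning as graph search over free regions can be sketched as below. The example assumes the qualitative map is stored as an adjacency dictionary keyed by region name and uses breadth-first search, which returns a route with the fewest region-to-region transitions. The region names and the choice of breadth-first search are illustrative assumptions.

```python
from collections import deque


def plan_route(adjacency, start, goal):
    """Return the shortest list of free regions from start to goal,
    or None if the goal cannot be reached."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        region = queue.popleft()
        if region == goal:
            path = []
            while region is not None:
                path.append(region)
                region = parents[region]
            return path[::-1]
        for neighbor in adjacency.get(region, ()):
            if neighbor not in parents:
                parents[neighbor] = region
                queue.append(neighbor)
    return None


# Hypothetical map: each key is a free region, each value lists the
# regions it is adjacent to or overlaps with.
region_map = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C", "E"],
    "E": ["D"],
}
route = plan_route(region_map, "A", "E")
```

Executing `route` then reduces to a series of local movements, each entering the next free region in the list, with no metric coordinates involved.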
